I had a network issue the other day which resulted in one host disconnecting from a pool for several hours. While things were broken, the host in question could not see any of its NICs: ifconfig was correct, but xapi was broken. After several reboots hoping things would fix themselves, I tried an emergency network reset.

An Internet search reveals other users also getting this magic 300 Mbps and fixing it by tuning offload parameters. Can someone help with this? Maybe post their NIC parameters using ethtool -k or pif-param-list?
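When people do post their NIC settings, comparing two dumps by eye is error-prone. Below is a minimal sketch for diffing them, assuming the usual `ethtool -k` layout of one `feature: on|off` pair per line. The function names and the sample text are made up for illustration and are not output from any host in this thread.

```python
from typing import Dict


def parse_offloads(ethtool_output: str) -> Dict[str, bool]:
    """Map each feature name in `ethtool -k` style text to True (on) or False (off)."""
    features: Dict[str, bool] = {}
    for line in ethtool_output.splitlines():
        name, sep, value = line.partition(":")
        tokens = value.split()
        state = tokens[0] if tokens else ""
        # Skip the "Features for eth0:" header and anything that is not on/off.
        if sep and state in ("on", "off"):
            features[name.strip()] = state == "on"
    return features


def diff_offloads(a: Dict[str, bool], b: Dict[str, bool]) -> Dict[str, tuple]:
    """Return the features present on both hosts whose state differs."""
    return {k: (a[k], b[k]) for k in sorted(a.keys() & b.keys()) if a[k] != b[k]}


# Illustrative sample only, not real output from the poster's NIC.
SAMPLE_A = """Features for eth0:
rx-checksumming: on
tx-checksumming: off
tcp-segmentation-offload: off
generic-receive-offload: on
large-receive-offload: off [fixed]
"""
SAMPLE_B = SAMPLE_A.replace(
    "generic-receive-offload: on", "generic-receive-offload: off"
)

if __name__ == "__main__":
    print(diff_offloads(parse_offloads(SAMPLE_A), parse_offloads(SAMPLE_B)))
```

To use it on a real host, feed `parse_offloads` the captured text of `ethtool -k <interface>` from each machine. The interface name depends on your setup.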

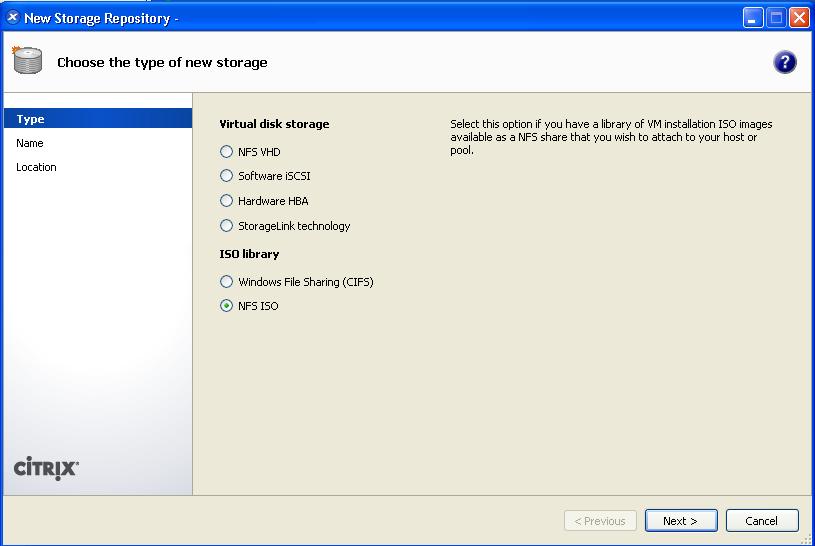

The host desktop has only one Ethernet NIC, an 82579LM, which supports a gigabit connection. I will soon get a managed switch to set up VLANs and bring both LAN and WAN into OPNsense. Before doing that I wanted to set things up right and do performance testing and tuning. I followed all the guides available on the Internet to turn tx, gro, … and all the other offload parameters on and off. I have been playing around with them for a while now. All the interface checksum offloading is disabled via the OPNsense GUI checkboxes (checkboxes enabled).

I ran iperf3 between the XCP host and the OPNsense guest. The interface between the host and the OPNsense VM is via XCP virtual networking, and I'm able to get speeds of up to 2 Gbps in both directions (up and down, using the -R flag). So the host-to-VM network performance is fine.

When I run iperf between the XCP host and my computer I get only about 300 Mbps in both directions. I have played around with all the offloading parameters using the pif-param-set command. I also disabled auto-negotiation and set the speed to 1000. Note that this interface is also the XCP management interface. I also ran iperf3 between the OPNsense VM and my computer: still the same 300 Mbps max.

The 2 Dell R740s are connected over the 2 10GbE connections to 2 separate switches, and the storage is connected over HBA fiber (also 2 separate fiber switches). I'm running into problems with network timeouts as soon as I create a bond (active-passive) of the 2 10GbE NICs. I'm not sure if 1 of the NICs also being the management NIC is causing problems in the bond, but it happens on both XenServer installs, both fresh installs. I have checked the switches, and the configurations for all 4 ports are the same. Is a bond needed for proper failover, or can we work without a bond? (We will also need a VLAN configured for Citrix traffic; can I connect a VLAN to 2 separate NICs?)

The other issue is related to storage: both servers are connecting to 1 LUN on an EMC storage. XenServer detects the LUNs and I can create an SR and connect the LUN, but after rebooting a XenServer host the LUN doesn't come back up. Trying to remove the LUN says it is still in use; lvmdisplay / pvdisplay show nothing. Does someone have any pointers, or has anyone maybe run into this as well?

# Xen server vdi not available install

Have been trying to install a new XenServer pool with 2 members installed with XenServer 8.2 CU1, but having problems with networking and storage.

In a XenServer pooled environment, if the pool master node becomes unresponsive for some reason, we will not be able to access the slave nodes and their corresponding VMs from XenCenter. At this point, if we are still able to SSH into the pool master manually, we can execute the following commands to make a slave node the pool master.

Note: Before changing the pool master, you have to disable the HA feature temporarily.

* xe pool-ha-disable – Disables HA (High Availability)
* xe host-list – Lists the UUIDs of all the hosts
* xe pool-designate-new-master host-uuid="UUID of the slave host"

If the pool master is entirely down, you can execute the following command on one of the slave nodes to convert it into the master.

* xe pool-emergency-transition-to-master

Then, re-establish a connection to the slaves.

The above fix was applied in one of our client environments, where three XenServers were running in a pooled environment with Xen version 5.6 SP2 installed. When the master node went unresponsive, we were not able to access the other two hosts using XenCenter, and we restored access by making a slave node the master.
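The two recovery paths described here can be written down as an ordered command plan, which is handy to review before touching a production pool. This is only a sketch: it builds the `xe` command lines and executes nothing, and the function names are hypothetical. The text does not spell out the command for reconnecting the slaves; `xe pool-recover-slaves` is assumed here, so check it against the documentation for your version.

```python
import uuid
from typing import List


def planned_master_change(new_master_uuid: str) -> List[List[str]]:
    """Commands to run on the current master while it is still reachable over SSH."""
    uuid.UUID(new_master_uuid)  # raises ValueError on a malformed UUID
    return [
        ["xe", "pool-ha-disable"],  # HA must be off before changing the master
        ["xe", "host-list"],        # look up the UUID of the host to promote
        ["xe", "pool-designate-new-master", f"host-uuid={new_master_uuid}"],
    ]


def emergency_master_change() -> List[List[str]]:
    """Commands to run on the slave being promoted when the master is entirely down."""
    return [
        ["xe", "pool-emergency-transition-to-master"],
        # Assumption: this command is not named in the text.
        ["xe", "pool-recover-slaves"],
    ]


if __name__ == "__main__":
    # Hypothetical UUID, for illustration only.
    for argv in planned_master_change("00000000-0000-0000-0000-000000000000"):
        print(" ".join(argv))
```

Keeping the plan as data rather than running it directly means the UUID can be validated and the sequence reviewed first. Each argument list can later be handed to `subprocess.run` on the appropriate host.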